The rise of artificial intelligence has created a new breed of data center—one that demands unprecedented levels of power, cooling, and most critically, network performance. Unlike traditional data centers that handle everyday computing tasks, AI data centers are purpose-built facilities for training and running large-scale machine learning models.

Understanding what makes these facilities unique helps explain why network infrastructure has become the make-or-break factor in AI success.

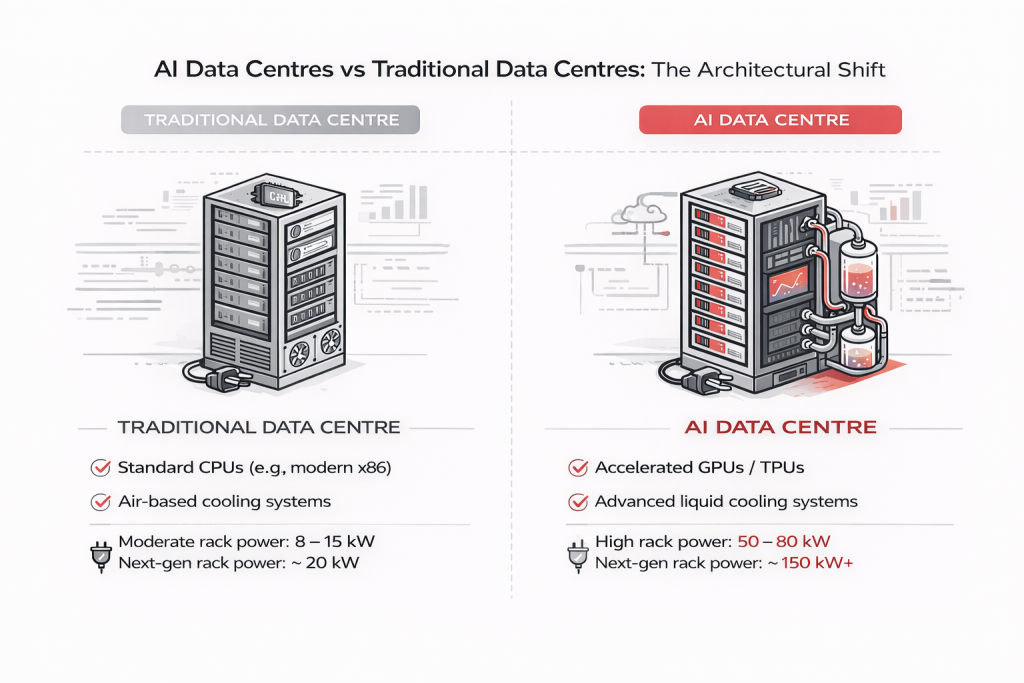

Traditional data centers are generalists. They run business applications, host websites, store databases, and handle email. Each task operates independently with moderate resource demands.

AI data centers are specialists built for one primary function: training and running neural networks at massive scale. This singular focus reshapes everything from processor choice to building design.

The fundamental shift starts with processors.

Traditional data centers rely on CPUs designed to handle diverse, sequential tasks efficiently—perfect for running varied business applications.

AI data centers are built around GPUs (Graphics Processing Units) and specialized AI accelerators like TPUs. These chips contain thousands of simple cores working in parallel, ideal for the repetitive mathematical operations required in machine learning.

Training an AI model requires massive parallel processing. Where a CPU handles tasks sequentially, a GPU operates like thousands of workers performing identical operations simultaneously.

Here’s where AI data centers diverge completely from traditional thinking.

In a traditional data center, servers communicate only occasionally. Standard Ethernet handles the sporadic, low-volume traffic between independent systems.

In an AI data center, thousands of GPUs must operate as a single unified system. When training large models, processors constantly synchronize their work, exchanging terabytes of data with almost no tolerance for latency.

Even milliseconds of delay cascade into hours of wasted training time. This demands:

Without proper network infrastructure, multi-million dollar GPU clusters operate at a fraction of their potential.
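As a rough illustration of how small delays add up, the sketch below models a training run in which every step stalls briefly while GPUs synchronize. The function name and all numbers (100 ms of compute per step, a 5 ms stall, one million steps) are assumptions chosen for the arithmetic, not measurements from any real cluster.

```python
def training_overhead(compute_ms, comm_delay_ms, steps):
    """Return (utilization, wasted_hours) when each step stalls on the network.

    Simplified model: every step is compute time plus a fixed network stall,
    with no overlap between the two. All inputs are hypothetical.
    """
    step_ms = compute_ms + comm_delay_ms
    utilization = compute_ms / step_ms
    wasted_hours = comm_delay_ms * steps / 1000 / 3600  # ms -> s -> hours
    return utilization, wasted_hours


# Assumed numbers: 100 ms compute per step, 5 ms stall, one million steps.
util, wasted = training_overhead(compute_ms=100, comm_delay_ms=5, steps=1_000_000)
print(f"GPU utilization: {util:.1%}")
print(f"Time lost to network stalls: {wasted:.2f} hours")
```

Under these assumptions, a 5 ms stall per step costs well over an hour of wall-clock time across the run, and that idle time is paid by every GPU in the cluster at once.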

AI computation generates extreme heat. Standard server racks draw 5-10 kilowatts. AI-optimized racks consume 50-100 kilowatts—equivalent to powering 20 homes from a single cabinet.

Air cooling cannot handle this density. Modern AI facilities use:

This physical complexity demands careful planning and monitoring to maintain optimal performance.

AI training consumes massive datasets—petabytes of images, text, and video. Unlike traditional databases, AI storage operates like a high-speed conveyor belt delivering continuous data streams to thousands of GPU workers.

Success requires:

The bottleneck isn’t finding data—it’s moving it fast enough to keep GPUs fully utilized.
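A back-of-the-envelope calculation shows why data movement becomes the limiting factor. The sketch below estimates the aggregate read rate needed to keep a cluster fed, then the best-case GPU utilization if storage delivers less than that. The function names and the workload figures (1,000 GPUs, 2,000 samples per second per GPU, 150 kB per sample) are illustrative assumptions only.

```python
def required_read_gbps(num_gpus, samples_per_sec_per_gpu, bytes_per_sample):
    """Aggregate storage read rate (gigabits/s) needed to keep every GPU fed."""
    bytes_per_sec = num_gpus * samples_per_sec_per_gpu * bytes_per_sample
    return bytes_per_sec * 8 / 1e9


def gpu_utilization_ceiling(available_gbps, required_gbps):
    """Best-case utilization if data delivery is the only bottleneck."""
    return min(1.0, available_gbps / required_gbps)


# Assumed workload: 1,000 GPUs, each consuming 2,000 samples/s of 150 kB.
need = required_read_gbps(1_000, 2_000, 150_000)
print(f"Required read rate: {need:.0f} Gb/s")
print(f"Utilization ceiling at 1,200 Gb/s: {gpu_utilization_ceiling(1_200, need):.0%}")
```

With these assumed figures, a storage path that delivers half the required rate caps GPU utilization at half, no matter how fast the processors are.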

AI data centers aren’t retrofitted warehouses. They’re industrial facilities designed from the ground up:

Managing AI data center networks presents unique challenges:

Scale: Thousands of 400G/800G fiber connections between GPU clusters, storage, and control systems.

Interdependence: Systems are tightly coupled. Network degradation directly impacts GPU utilization and training efficiency.

Performance Sensitivity: AI workloads expose network issues that traditional applications tolerate.

Rapid Evolution: Infrastructure changes constantly as new GPU generations and networking technologies emerge.
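To put the 400G and 800G link speeds mentioned above in perspective, the sketch below computes the ideal time to move a fixed payload over a single link. The 1 TB payload and the `efficiency` parameter are assumptions for illustration; real links carry protocol overhead and rarely sustain full line rate.

```python
def transfer_seconds(size_bytes, link_gbps, efficiency=1.0):
    """Seconds to move size_bytes over one link rated at link_gbps gigabits/s.

    efficiency is a hypothetical factor (0-1] for protocol overhead;
    1.0 means ideal line rate.
    """
    return size_bytes * 8 / (link_gbps * 1e9 * efficiency)


ONE_TB = 10**12  # decimal terabyte, an assumed payload size

for gbps in (400, 800):
    print(f"{gbps}G link: {transfer_seconds(ONE_TB, gbps):.0f} s per TB")
```

At ideal line rate, a terabyte takes about 20 seconds on a 400G link and about 10 seconds on an 800G link, which is why any degradation on these links shows up quickly as idle GPU time.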

Organizations need robust network infrastructure capable of supporting these demanding workloads while maintaining visibility and control.

Current AI models are limited by available infrastructure. Future demands will intensify:

As AI drives up network bandwidth requirements, broadband bandwidth consumption is set to surge. ISPs and telcos will in turn need to handle increased loads on their BSS and logging solutions. Jaze ISP Manager provides a scalable architecture for large-scale ISP networks that adapts to increased loads without significant hardware investment.